Dear Steve Lemay

The internet is celebrating the news that Alan Dye, Apple’s head of design, is leaving

In Norton Commander, as well as in Windows Explorer, it’s always been the norm that folders go first, then files

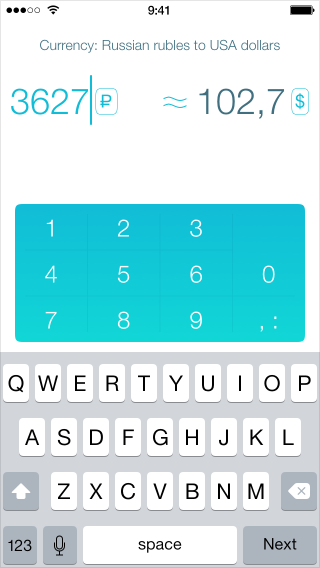

How much can a design change when adapting it for mobile?

New email apps keep coming out, trying to organize your inbox. Folders in email were invented nearly fifty years ago, and since then we’ve had filters

Reversibility is a property of an interface input control, where the user can return the control to its initial state at any time

Duolingo used to be a great app. I opened it to practice almost every day. But then they changed the design
